Overview

Moe's Southwest Grill partnered with our HCI team to answer a strategic question: should Moe's implement voice ordering, and if so, what should that experience look like? Rather than assuming voice interaction was inherently valuable, we approached the project as a research problem first - investigating customer behavior, restaurant context, employee realities, and the conditions under which voice ordering might or might not make sense.

The project took place over a semester in an HCI research methods course, but the work was grounded in a real industry collaboration. Our team used a broad mix of methods, including surveys, interviews, field observation, participatory design, competitive analysis, and expert evaluation, to move from an open-ended business question toward more concrete design guidance and prototype direction.

By the end of the semester, we delivered a set of findings and recommendations to the Moe's team, along with supporting artifacts including a demo scenario, design guidelines, and a functioning voice interaction prototype.

My Contribution

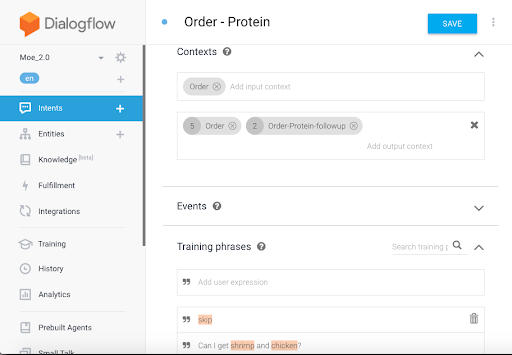

I contributed across the project, but my strongest ownership was in facilitation and synthesis: guiding discussions, planning agendas, scripting and facilitating the participatory design workshop, managing communication with our professor, TA, and industry partner, and delivering the final presentation. I also independently built the final AI-driven voice prototype in Dialogflow, which became one of the project’s most tangible deliverables.

Research and Framing

Because the prompt was so open-ended, we started by mapping everything we needed to understand: customer behavior in-store, operational realities behind the counter, how voice systems succeed or fail in service contexts, and what kinds of ordering moments might benefit from voice interaction. This early framing work helped us separate the project into more manageable research paths rather than jumping prematurely to a solution.

Early framing work helped us turn a vague partner question into a more structured set of research questions

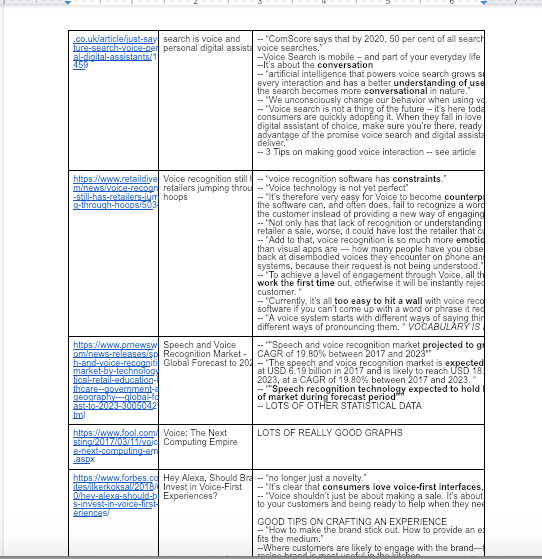

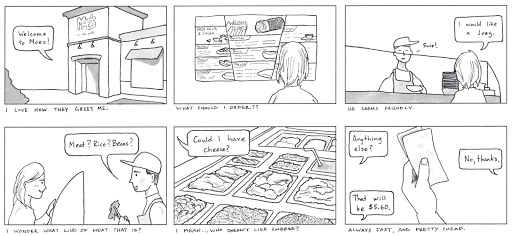

From there, we combined desk research with firsthand observation. We gathered scholarly and industry references, organized them into a shared team repository, and visited a Moe's location to observe customer behavior, staff interactions, and potential points where voice interaction might fit - or create friction. This gave us both higher-level context and grounded service insight.

Desk research and field observation gave us both a structured knowledge base and a grounded view of how service interactions actually played out in-store

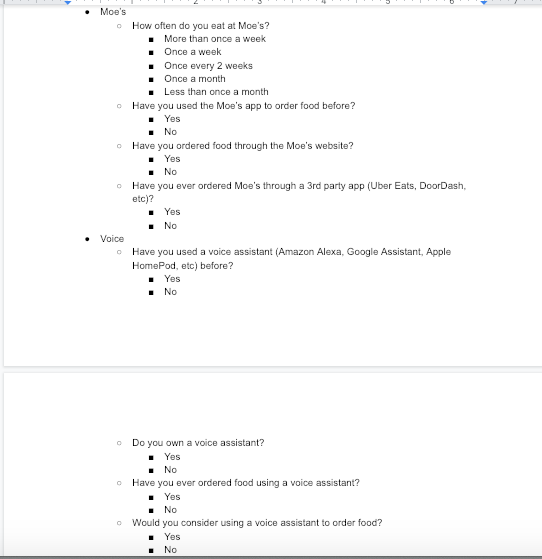

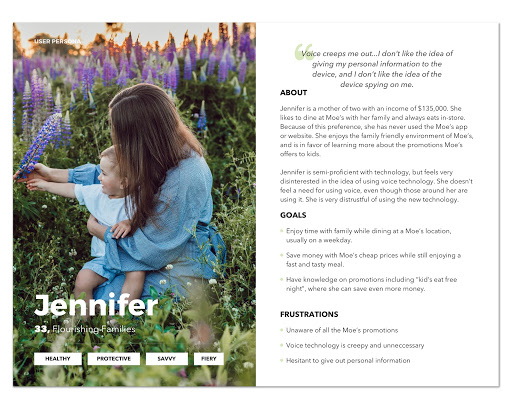

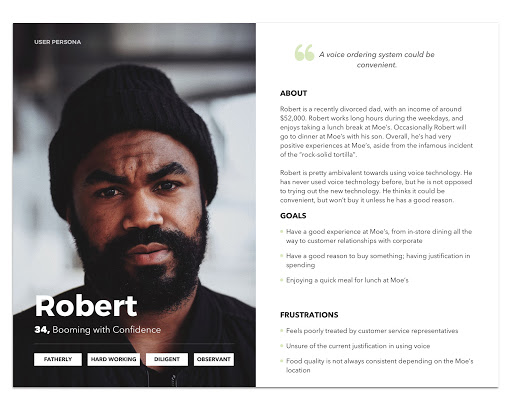

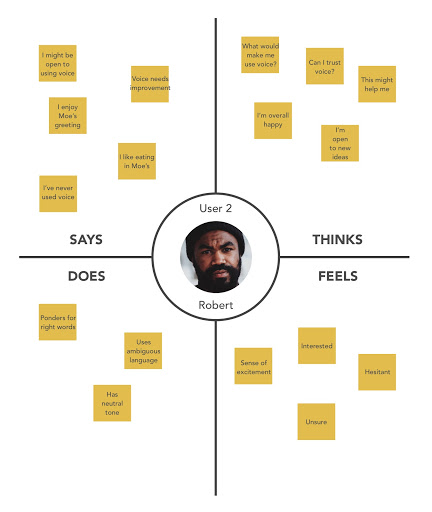

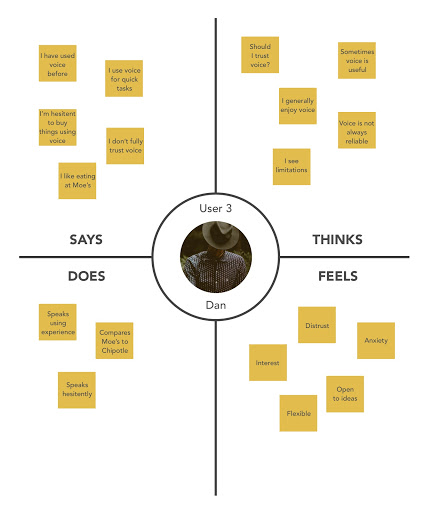

We then moved into customer-facing research. A short survey gave us initial quantitative data and helped us screen participants for follow-up interviews, while the interviews deepened our understanding of user perceptions, concerns, and expectations around ordering. We translated that material into personas, empathy maps, and scenario-based artifacts that made the emerging user landscape easier to communicate both within the team and back to the partner.

We also used competitive analysis to understand how adjacent voice systems were already being framed in practice. Looking at examples like the Starbucks voice assistant helped us identify both the promise and the limits of current voice ordering experiences.

Initial survey work gave us both directional quantitative signals and a way to recruit stronger interview participants

These artifacts helped us synthesize and communicate what we were learning about different user needs and expectations

Competitive analysis helped us study how similar voice experiences were already being structured in the wild

At a key midpoint, we presented our findings and proposed directions to the Moe's team at their corporate office. That conversation surfaced an important shift: Moe's was about to undergo a major rebrand. Because the menu, store environment, and overall experience were changing, we revisited the field context by observing a test location and grounding the next phase of the project in the updated brand reality.

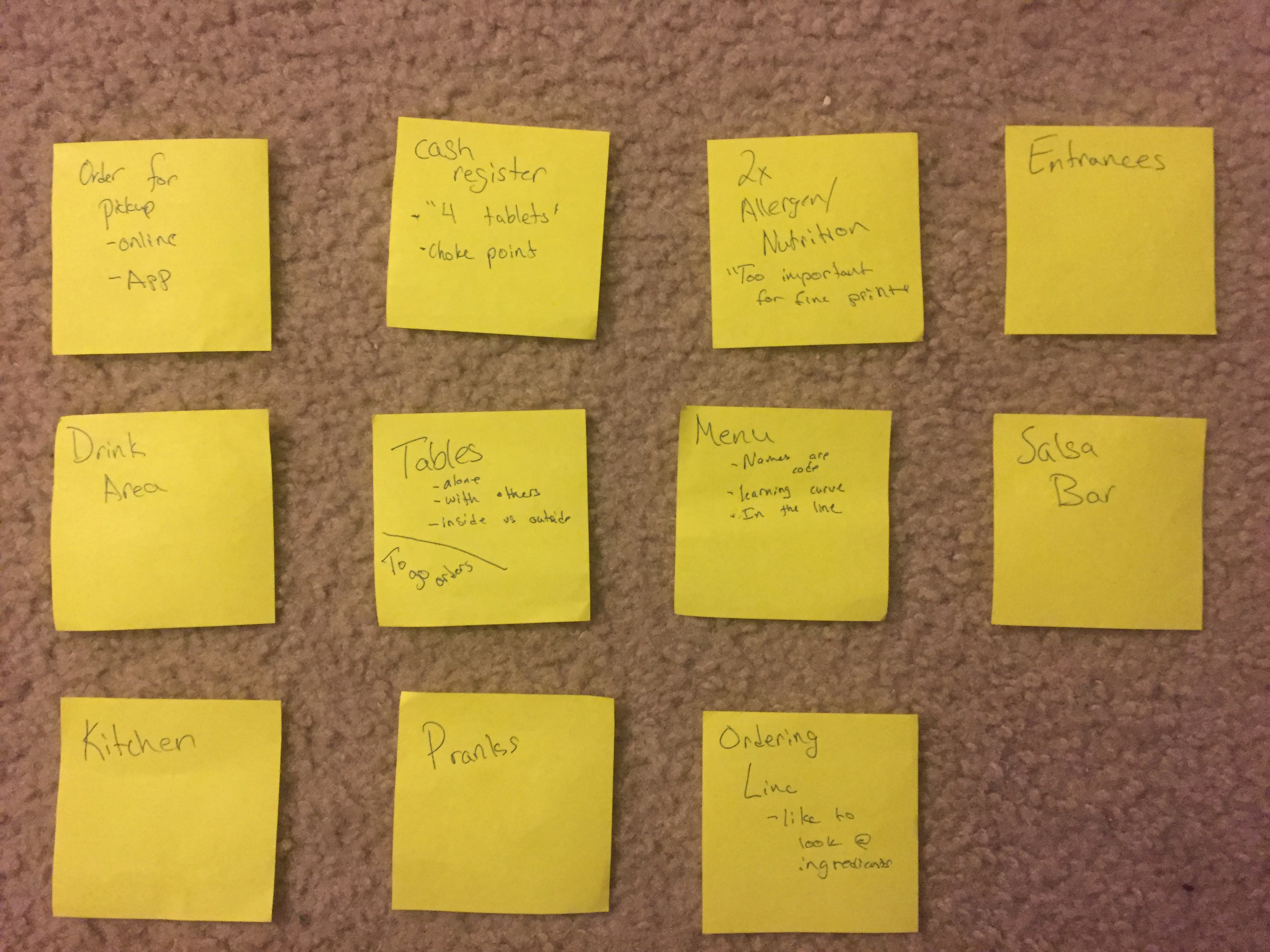

The rebrand changed the service environment enough that we needed to re-ground the project in the updated in-store experience

Participatory Design and Synthesis

At this stage, the project shifted from understanding the current experience to actively exploring what a future voice interaction might need to support. Participatory design became especially valuable because it let us move beyond abstract assumptions and surface more concrete expectations, behaviors, and design ideas with real participants.

To prepare for this phase, we returned to the rebranded Moe's for additional observation and interviewed an employee to better understand how the new environment changed service flow. Those insights fed directly into a participatory design workshop that I largely scripted and led as facilitator. My role was to shape the flow of the workshop, guide transitions, and help participants elaborate on their thinking in ways that would be useful for later synthesis.

Additional fieldwork in the rebranded store helped us prepare the workshop around the updated service experience

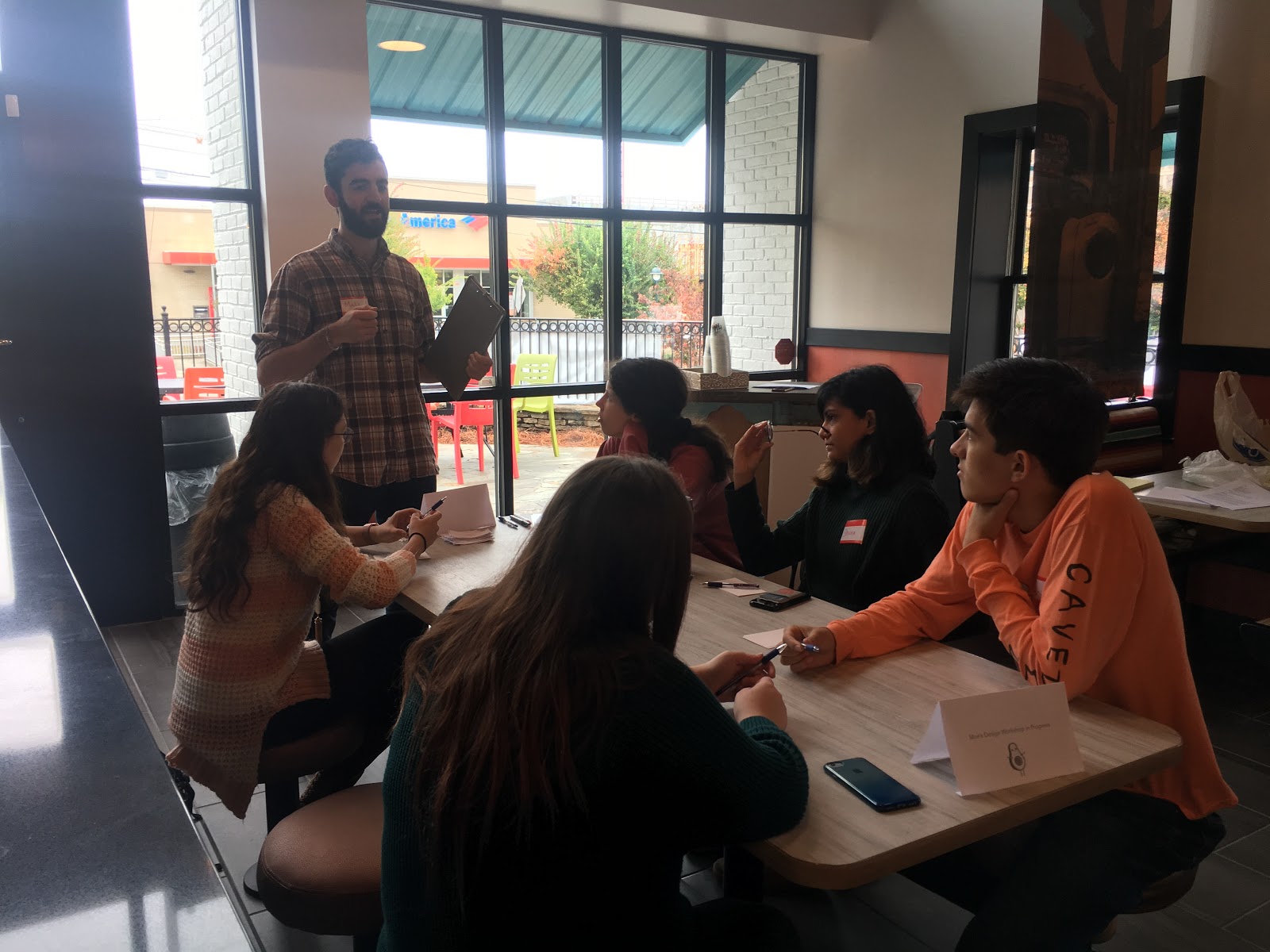

I led the workshop flow, helping participants move through activities and articulate their expectations in detail

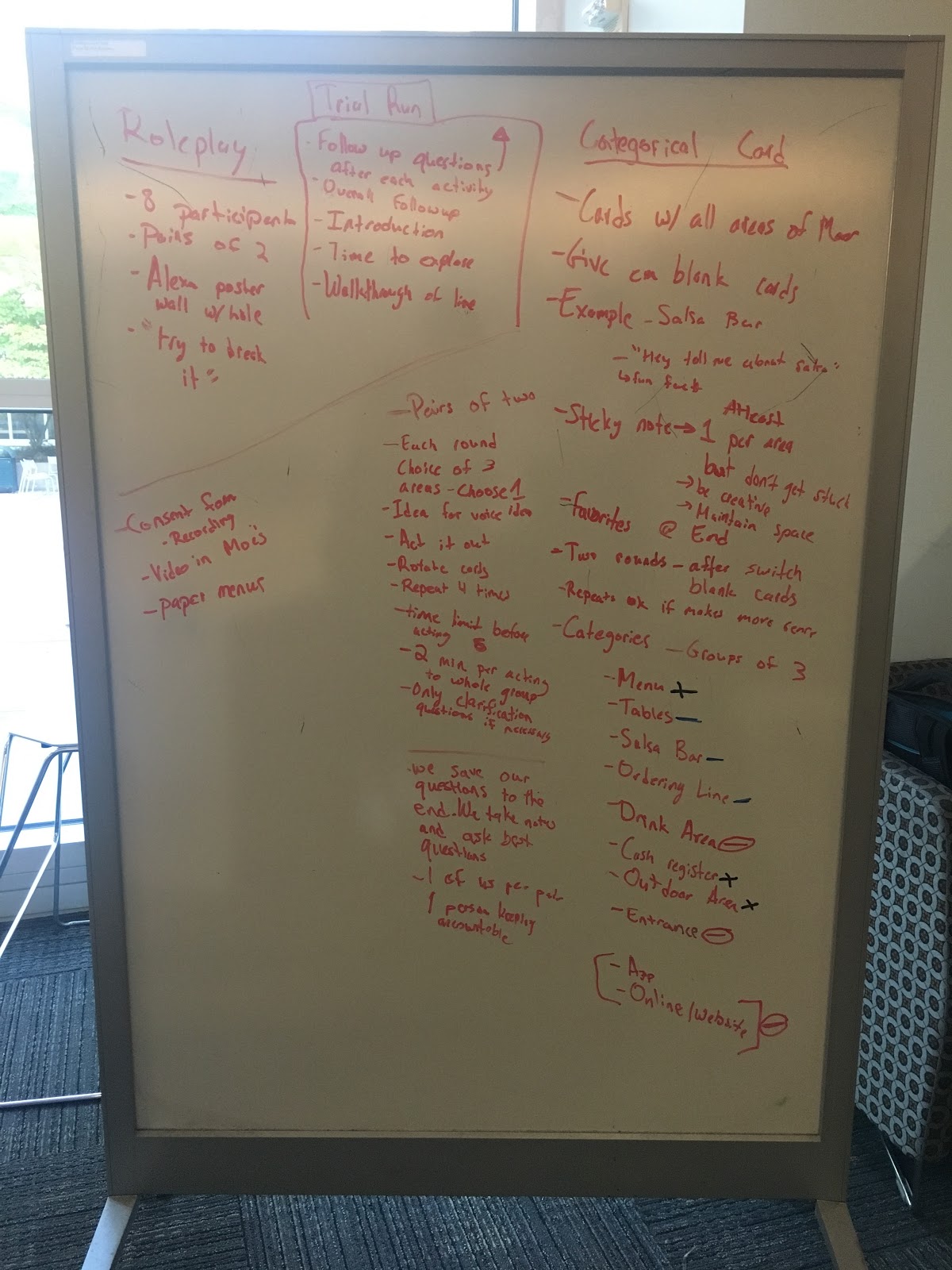

The workshop activities relied on roleplay, with participants rotating between acting as customers and acting as the voice ordering system. This format helped surface conversational expectations, breakdowns, and moments of friction more effectively than a simple discussion would have. Before running the live session, we tested and refined the activities with fellow HCI students.

We designed and trial-ran workshop activities before the live session to make sure they produced useful, concrete feedback

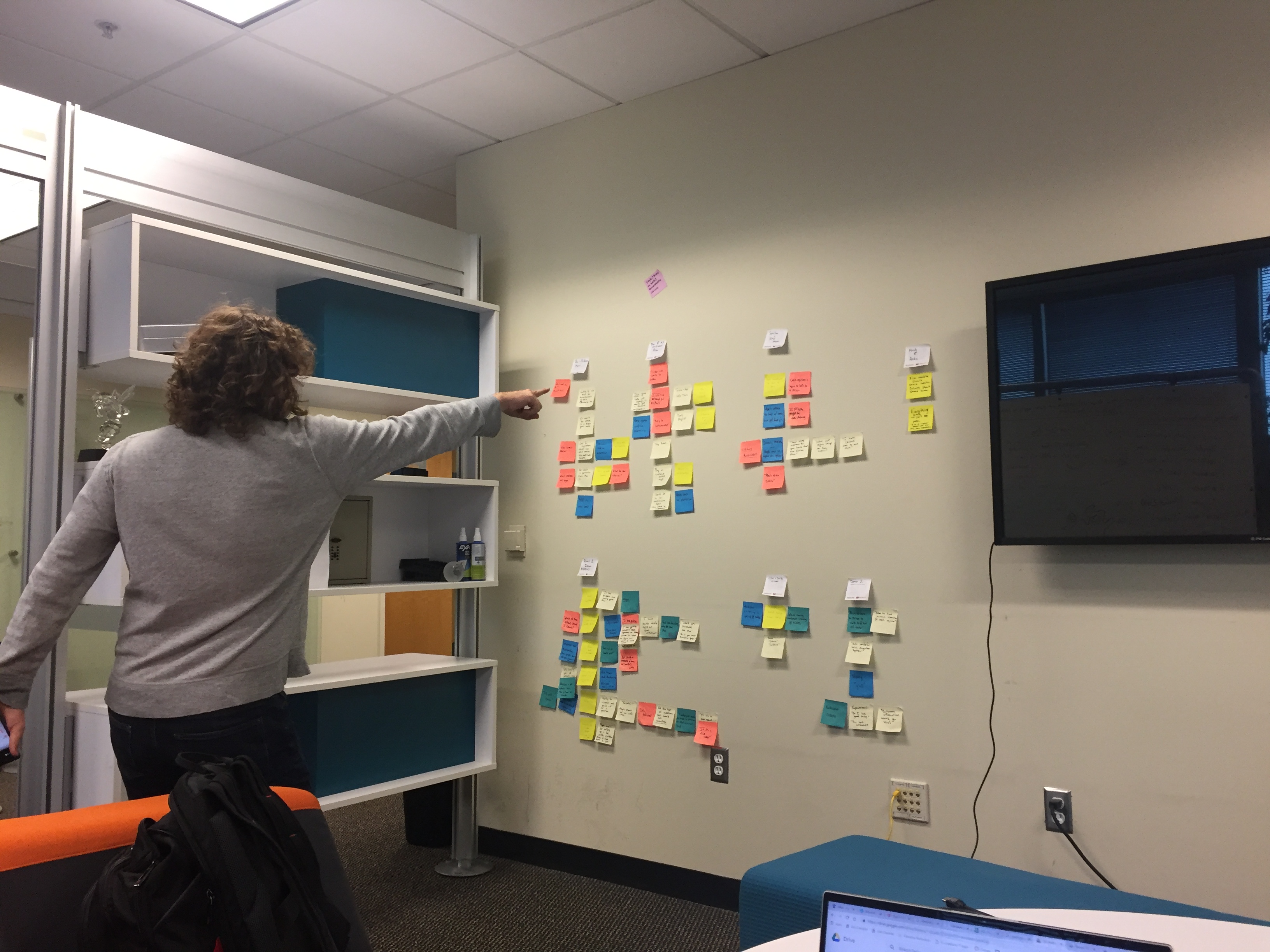

The workshop generated rich feedback on both the new Moe's brand experience and the expectations people brought to voice interaction in a restaurant context. Afterward, we reviewed the recordings and organized the findings into a large affinity map that became the basis for the project’s final direction. This synthesis phase helped us move from broad research evidence to clearer design implications and deliverables.

The live workshop created a space for participants to act through the experience rather than only describe it

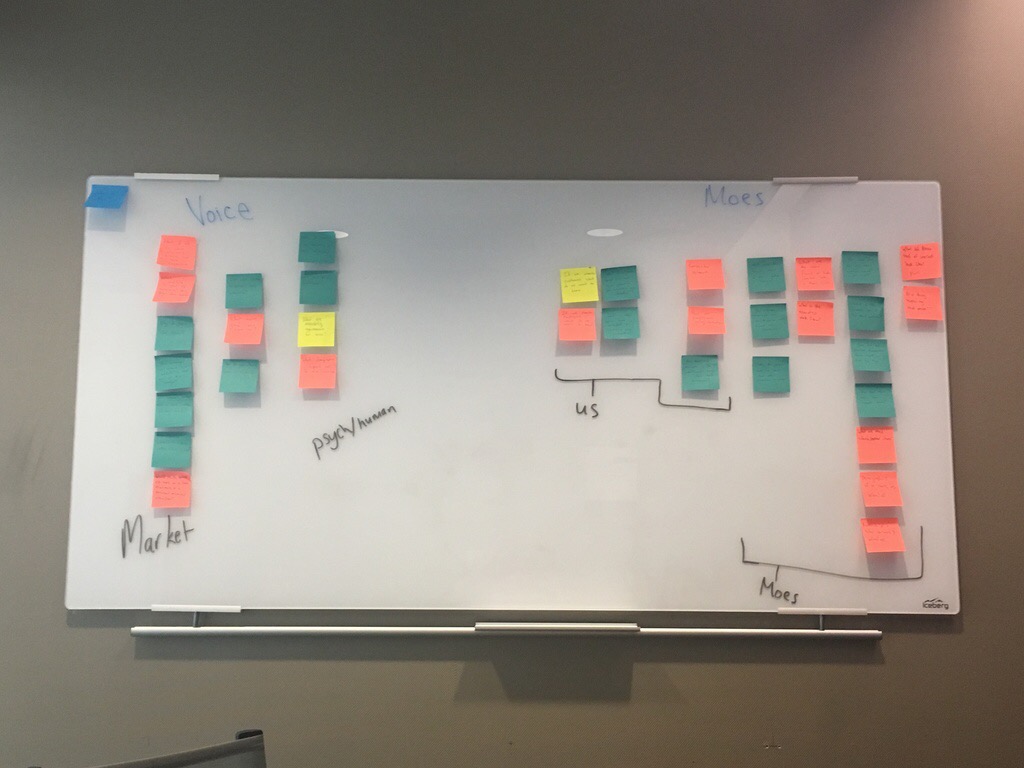

Post-workshop synthesis turned a large volume of qualitative input into clearer themes, design implications, and next-step decisions

Prototype, Evaluation, and Outcome

The final phase of the project focused on translating the research into deliverables Moe's could actually react to and potentially build from. That meant moving from abstract findings toward concrete guidance, prototype scenarios, and a working proof of concept.

We first distilled the research into a set of design guidelines summarizing the most important implications for future voice experience work. Because those guidelines are restricted by NDA, they cannot be shown here in full. To make the recommendations more tangible, we also created a scenario-driven demo of an idealized voice ordering interaction using BotSociety.

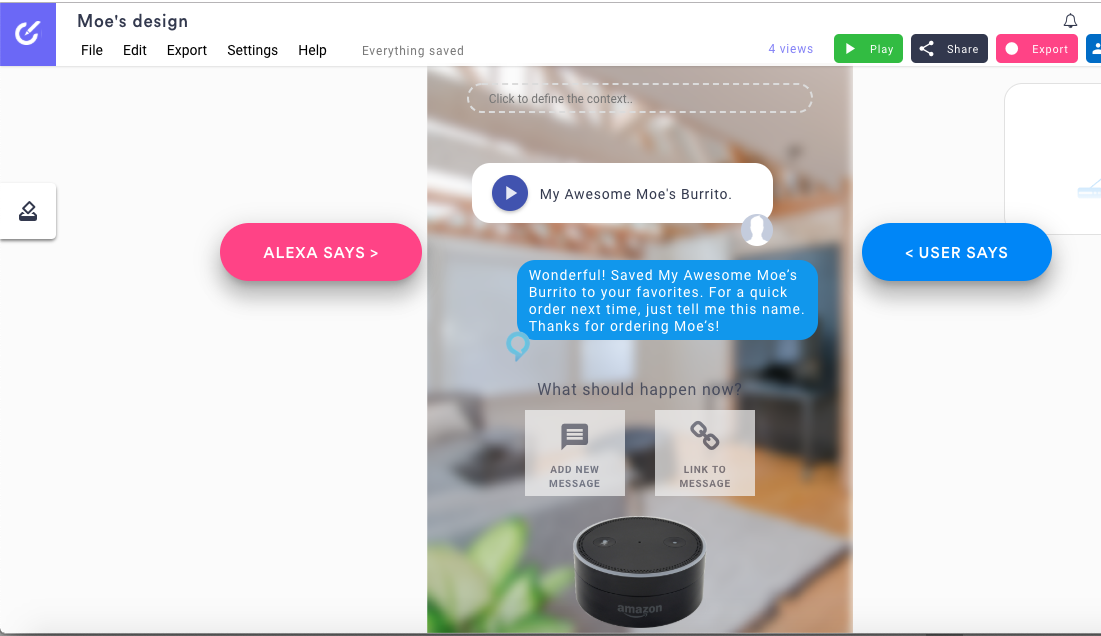

BotSociety helped us turn research-driven guidance into a more concrete example of what the interaction could feel like

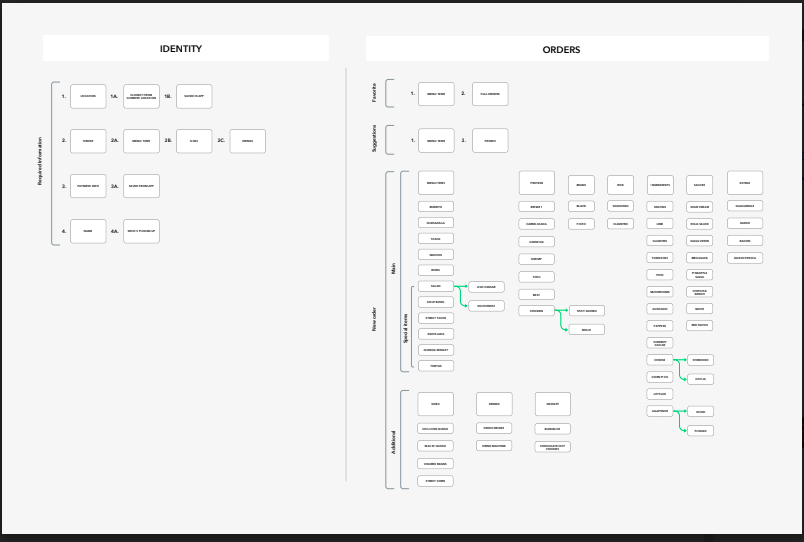

A key step was translating the Moe's menu and ordering flow into a voice-friendly information architecture. We mapped the structure of the menu, clarified how ordering should progress conversationally, and used that structure as a foundation for both the demo scenario and the final prototype.
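As a rough illustration of what a voice-friendly information architecture can look like in code, the sketch below models an ordering flow as an ordered list of steps, each with one slot to fill. It is not the architecture we delivered and not the Dialogflow implementation: the `Step` class, the `FLOW` list, the `next_step` and `fill` helpers, and every menu item are hypothetical placeholders chosen only to show the idea of progressing through an order one question at a time.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Step:
    slot: str            # name of the piece of the order this step fills
    prompt: str          # what the voice system would ask
    options: list[str]   # accepted answers (placeholder items, not a real menu)
    multi: bool = False  # True if the customer may pick several options


# Hypothetical ordering flow, asked in this order.
FLOW = [
    Step("entree", "What would you like to order?", ["burrito", "bowl", "tacos"]),
    Step("protein", "Which protein?", ["chicken", "steak", "tofu"]),
    Step("toppings", "Any toppings?", ["lettuce", "salsa", "cheese"], multi=True),
]


def next_step(order: dict) -> Step | None:
    """Return the first step whose slot is still empty, or None when the order is complete."""
    for step in FLOW:
        if step.slot not in order:
            return step
    return None


def fill(order: dict, utterance: str) -> dict:
    """Match an utterance against the current step's options and return an updated order."""
    step = next_step(order)
    if step is None:
        return order
    words = utterance.lower().replace(",", " ").split()
    chosen = [opt for opt in step.options if opt in words]
    if not chosen:
        return order  # nothing recognized, so the same step would be asked again
    updated = dict(order)
    updated[step.slot] = chosen if step.multi else chosen[0]
    return updated
```

In this sketch, an unrecognized reply leaves the order unchanged so the same question is asked again. That is one simple way to handle a breakdown, and a real system would need richer repair strategies.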

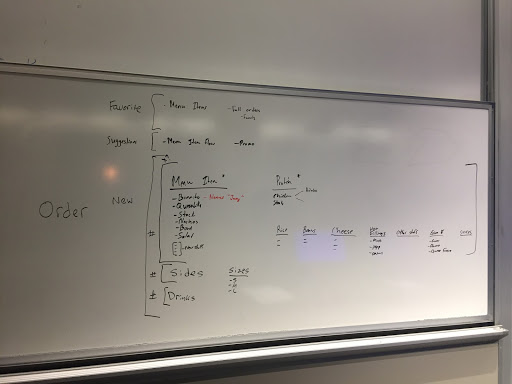

We translated Moe's menu and ordering flow into a voice-friendly information architecture that could support the prototype

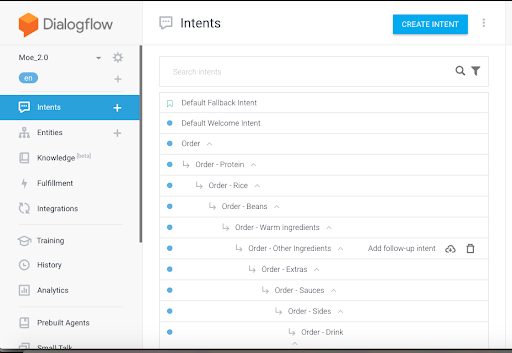

I then independently built the final AI voice prototype in Dialogflow. This required learning the platform, programming the conversational flow, and translating the information architecture into a functioning interaction model. The result was not a polished production system, but it was a meaningful proof of concept and one of the project’s strongest final deliverables.

The Dialogflow prototype turned our research and information architecture into a working conversational proof of concept

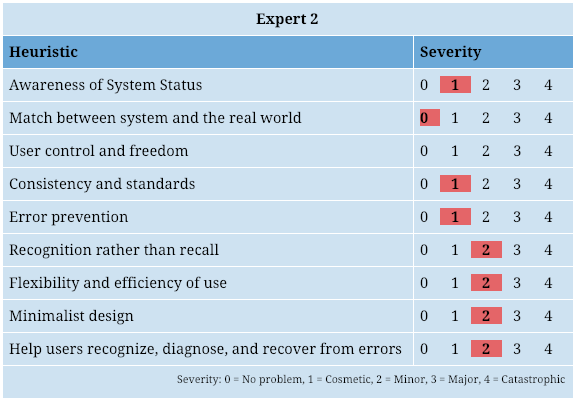

Once the prototype was working, we designed evaluation criteria and brought in expert reviewers with deep experience in voice and audio interaction. We also ran lighter-weight user feedback sessions to capture non-expert perspectives. We then packaged the research findings, design guidance, prototype, and evaluation results into a final presentation for the Moe's team. The project ended not with a single “answer,” but with a grounded recommendation set and a more concrete foundation for future exploration.

Expert evaluation helped us pressure-test the prototype and the broader voice interaction direction

The final handoff brought together research findings, design guidance, prototype work, and partner-facing recommendations

Outcome

This project was less about proving that voice ordering should exist and more about understanding the conditions under which it might succeed. For me, it was an important exercise in running broad research, facilitating participatory work, and turning a messy service question into a working prototype and concrete deliverables for the partner.

Gallery

These images are less about the formal argument of the case study and more about the human texture of the project - the team sessions, workshop moments, notes, and synthesis work that made a semester-long collaboration feel real.

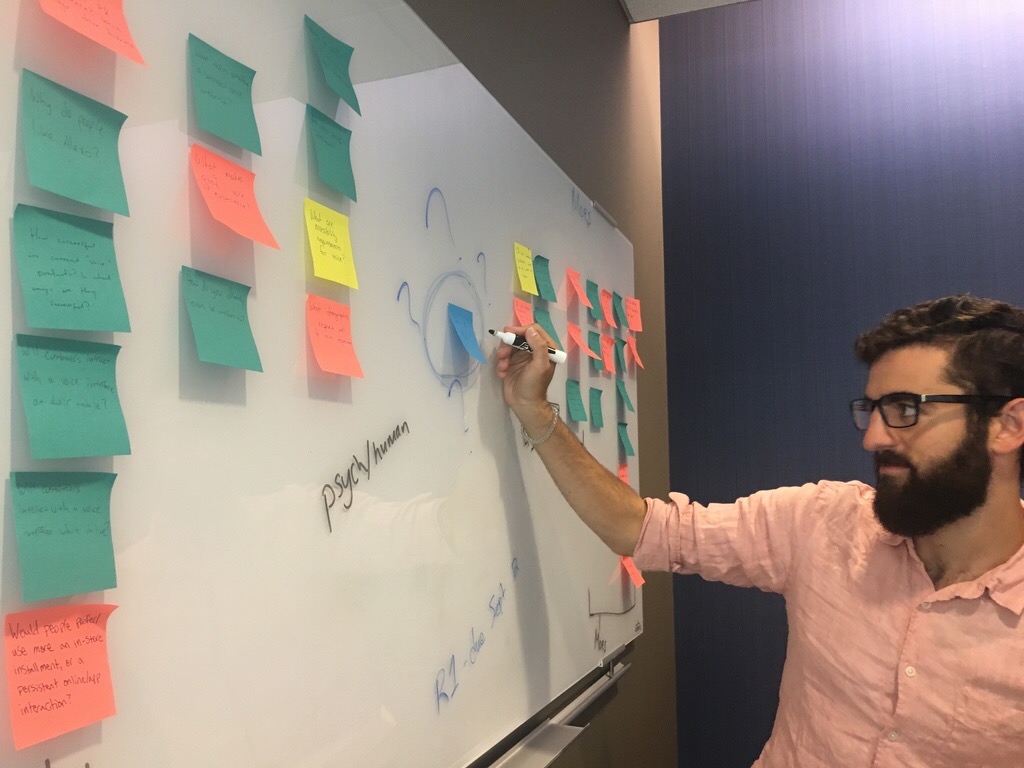

One of our earliest team working sessions as we began framing the project

Experiencing the product and service firsthand helped ground our later research and design conversations

Participants and team members working through one of the participatory design activities

Synthesis was a major part of the project, turning a large volume of research input into decisions and deliverables